Howdy folks! Long time no write.

In this post, I will be answering a couple of questions from the previous posts about dropping columns.

A quick refresh

If you haven’t read the previous posts on this topic, here is the list:

Howdy folks! Long time no write.

In this post, I will be answering a couple of questions from the previous posts about dropping columns.

If you haven’t read the previous posts on this topic, here is the list:

In my last article - Identify Tables With Dropped Columns

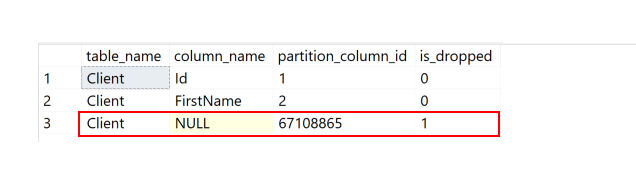

- we saw how we can identify tables that have columns that were dropped.

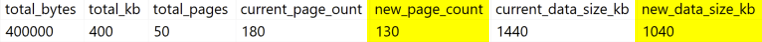

Today, we are going to check a way to approximately calculate how much space we can expect to recover if we rebuild our table.

If you want to read the 1st article I published on this subject, you can read it here - What happens when we drop a column on a SQL Server table? Where’s my space?

On my last article - What happens when we drop a column on a SQL Server table? Where’s my space?

- I have shown what happens when we drop a column from a table.

Today, we are going to check if we have a way to find tables with dropped columns.

As we learnt, when we drop a column this is just a metadata operation and won’t clear the space that was being used. You may be asking - Do I have more tables with dropped columns that I’m not aware of? - And that is a legit question.

Let’s see how we can achieve this.

This article was initially posted on SQLServerCentral @ 2024-04-26.

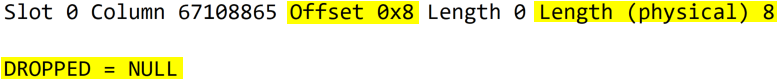

Short answer: The column is marked as ‘deleted’ and will stop being visible/usable.

But, most importantly - The record/table size will remain unchanged.

Dropping a column is a metadata/logical operation, not a physical one. This means that the data isn’t removed/overwritten by this action.

If we were talking about deleting data (records), as Paul Randal mentions here :

A couple of days ago I was doing some cleaning on some Azure SQL DBs and shrinking some files to lower the bill.

To check my progress I needed to check the size before/after the task in an easy way for multiple Azure SQL DBs.

If you need to check the storage space being used by 1 Azure SQL DB, that is as easy as jumping on the portal, select the desired database and you can find the information in the Overview. This is shown below.